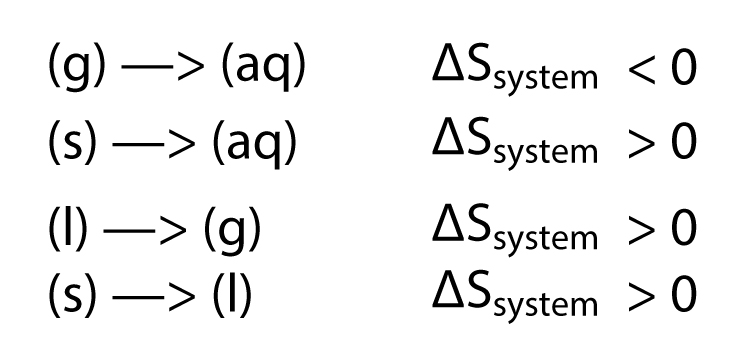

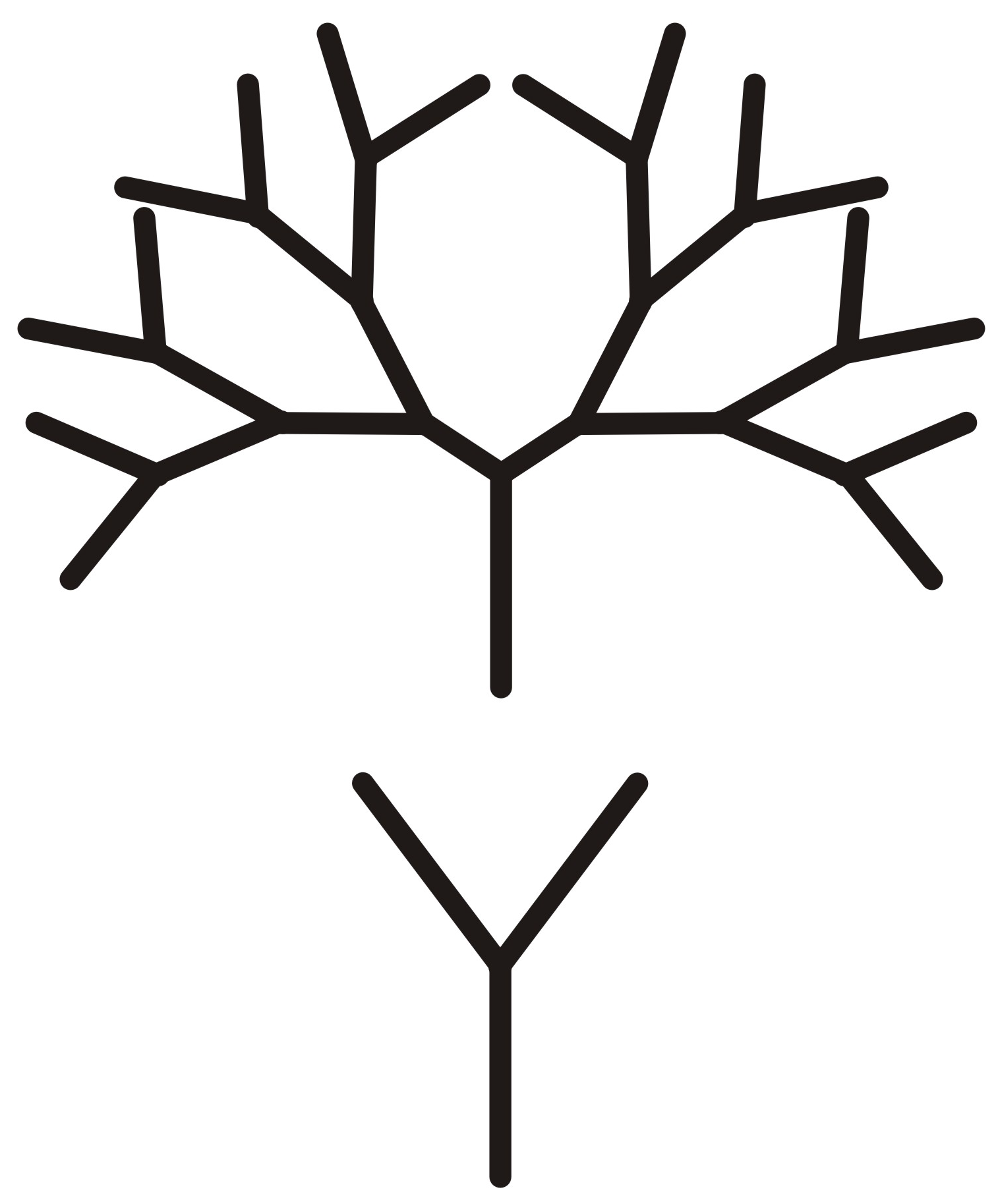

These decisions form a tree, and we can split the entropy in steps along it:

$$H(B, C, D) = H(\text{Decision 2}) + \tfrac{1}{2} H(C, D) = H(\tfrac{1}{2}, \tfrac{1}{2}) + \tfrac{1}{2} H(\tfrac{1}{2}, \tfrac{1}{2})$$

$$H(A, B, C, D) = \left(1 + \tfrac{1}{2} + \tfrac{1}{4}\right) H(\tfrac{1}{2}, \tfrac{1}{2})$$

Let's check that this matches Shannon's formula. Since $H(\tfrac{1}{2}, \tfrac{1}{2}) = 1$ bit, the stepwise split gives $1.75$ bits, the same value Shannon's formula gives directly for the outcome probabilities $\tfrac{1}{2}, \tfrac{1}{4}, \tfrac{1}{8}, \tfrac{1}{8}$ that this tree implies. So we can see that Shannon entropy isn't arbitrary: it (and its formula) is deeply related to our intuition of how information should be split as we break a process into pieces.

In thermodynamics, the term entropy, symbol $S$, derives from the Greek word tropos, meaning transformation, and was coined by a German physicist. The entropy formula is given as $\Delta S = q_{\text{rev,iso}} / T$. If we add the same quantity of heat at a higher temperature and at a lower temperature, the increase in randomness will be greater at the lower temperature. Hence, for a given quantity of heat, the entropy change is inversely proportional to temperature.

ABSTRACT: We discuss the principles of a High-Entropy Randomness Generator (also called a Hardware Random Number Generator). Novel measures of symbol dominance ($d_{C1}$ and $d_{C2}$), symbol diversity ($D_{C1} = N(1 - d_{C1})$ and $D_{C2} = N(1 - d_{C2})$), and information entropy ($H_{C1} = \log_2 D_{C1}$ and …).
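As a quick numerical check of the stepwise split, here is a minimal Python sketch. It assumes the four outcomes have probabilities A = 1/2, B = 1/4, C = 1/8, D = 1/8; the text does not state these explicitly, they are inferred from the coefficients 1, 1/2 and 1/4 in the split. The helper name `shannon_entropy` is purely illustrative.

```python
import math


def shannon_entropy(probs):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


# Assumed outcome probabilities (inferred from the split, not given in the text).
probs = {"A": 0.5, "B": 0.25, "C": 0.125, "D": 0.125}

# Entropy of a single fair binary decision, H(1/2, 1/2).
h_coin = shannon_entropy([0.5, 0.5])

# Stepwise split: each deeper decision is only reached half of the time.
h_cd = h_coin                       # H(C, D), a fair choice between C and D
h_bcd = h_coin + 0.5 * h_cd         # H(B, C, D) = H(Decision 2) + 1/2 * H(C, D)
h_abcd_split = h_coin + 0.5 * h_bcd  # (1 + 1/2 + 1/4) * H(1/2, 1/2)

# Shannon's formula applied directly to the four outcome probabilities.
h_abcd_direct = shannon_entropy(probs.values())

print(h_abcd_split, h_abcd_direct)  # prints: 1.75 1.75
```

Both routes give 1.75 bits, which is what the split predicts: one full binary decision, plus a second one reached half of the time, plus a third reached a quarter of the time.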

The purpose of this post is to record some of my musings on Shannon's viewpoint of entropy. Shannon's view of entropy is framed in terms of sending signals from one party to another. For example, consider a source that wants to send messages consisting entirely of symbols from a given set.

In thermodynamics, entropy is the measure of the disorder of a system. In equations, entropy is usually denoted by the letter $S$ and has units of joules per kelvin (J⋅K⁻¹), or kg⋅m²⋅s⁻²⋅K⁻¹. It is an extensive property of a thermodynamic system, which means its value changes depending on the amount of matter that is present.

References: Claude Shannon's classic original paper “A Mathematical Theory of Communication”, and the classic textbook “An Introduction to Probability and Random Processes” by Gian-Carlo Rota and Kenneth Baclawski, which at the time of this writing is available in an open source collection.